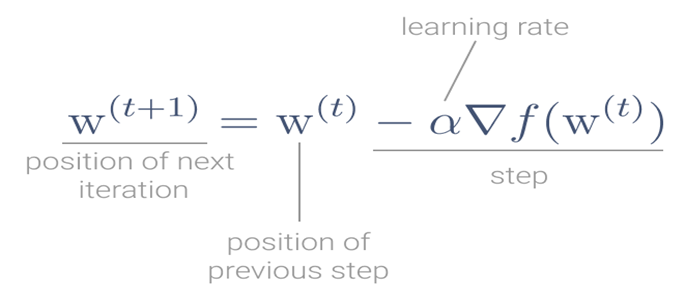

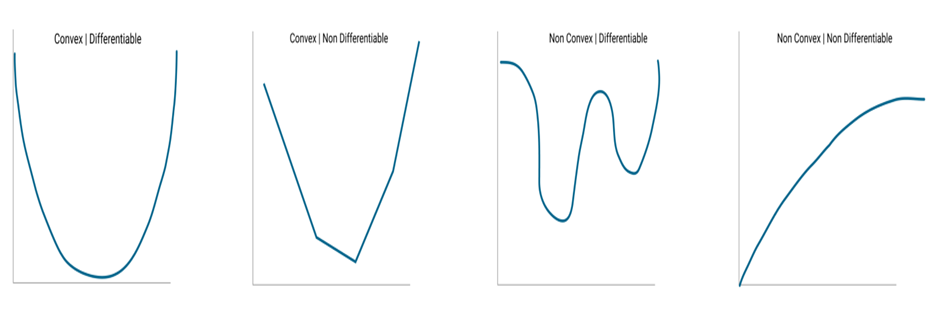

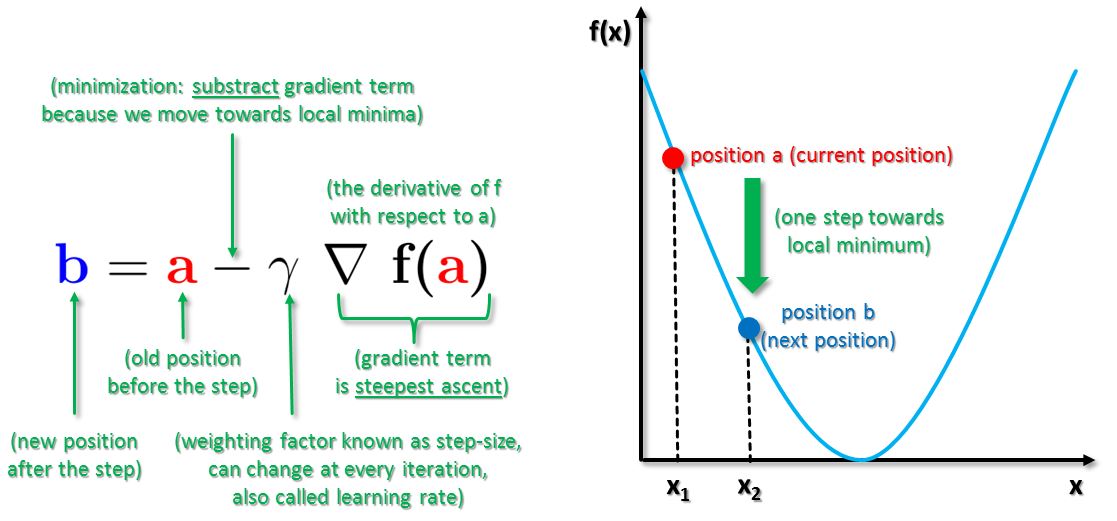

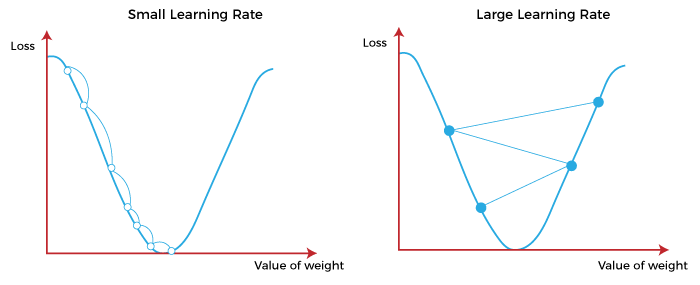

MathType - Gradient descent is an iterative optimization algorithm for finding local minima of multivariate functions. At each step, the algorithm moves in the direction opposite to the gradient, consequently reducing…

By a mysterious writer

Last updated 22 December 2024
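
The description above can be illustrated with a minimal sketch. It is not taken from any of the linked articles: the function name `gradient_descent`, the parameters `learning_rate`, `max_iters`, and `tol`, and the example function f(x, y) = (x - 1)^2 + 2(y + 3)^2 are all assumptions chosen for illustration. The sketch uses a fixed step size, which is only one of several common choices.

```python
from typing import Callable, List, Sequence


def gradient_descent(
    grad: Callable[[Sequence[float]], List[float]],
    x0: Sequence[float],
    learning_rate: float = 0.1,  # assumed fixed step size
    max_iters: int = 1000,       # assumed iteration cap
    tol: float = 1e-8,           # assumed stopping threshold on the gradient norm
) -> List[float]:
    """Repeatedly step in the direction opposite to the gradient."""
    x = list(x0)
    for _ in range(max_iters):
        g = grad(x)
        # Stop once the gradient is (almost) zero: we are near a stationary point.
        if sum(gi * gi for gi in g) ** 0.5 < tol:
            break
        # Move against the gradient, scaled by the step size.
        x = [xi - learning_rate * gi for xi, gi in zip(x, g)]
    return x


# Hypothetical example: f(x, y) = (x - 1)^2 + 2 * (y + 3)^2,
# whose only minimum is at (1, -3).
def grad_f(p: Sequence[float]) -> List[float]:
    x, y = p
    return [2.0 * (x - 1.0), 4.0 * (y + 3.0)]


minimum = gradient_descent(grad_f, [0.0, 0.0])
print(minimum)
```

For this convex example the iterates approach (1, -3). On a non-convex function the same loop would only be expected to reach a local minimum, and too large a `learning_rate` can make it diverge.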

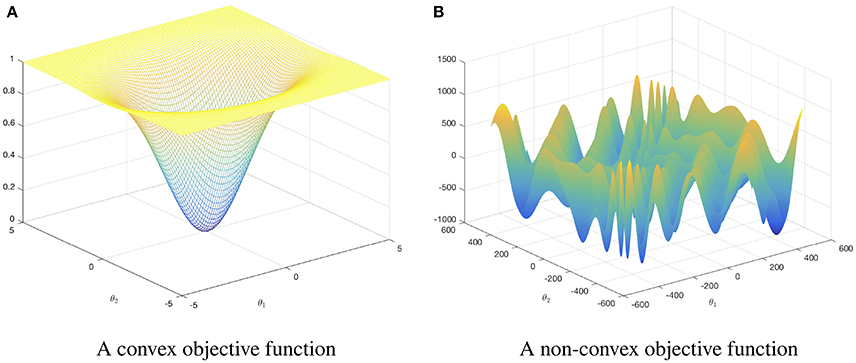

Optimization Techniques used in Classical Machine Learning ft: Gradient Descent, by Manoj Hegde

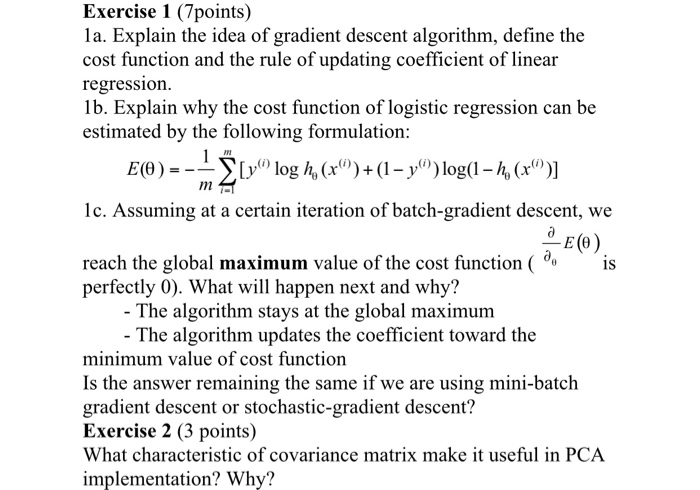

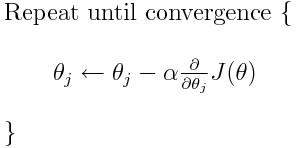

Solved: Exercise 1 (7 points) 1a. Explain the idea of gradient

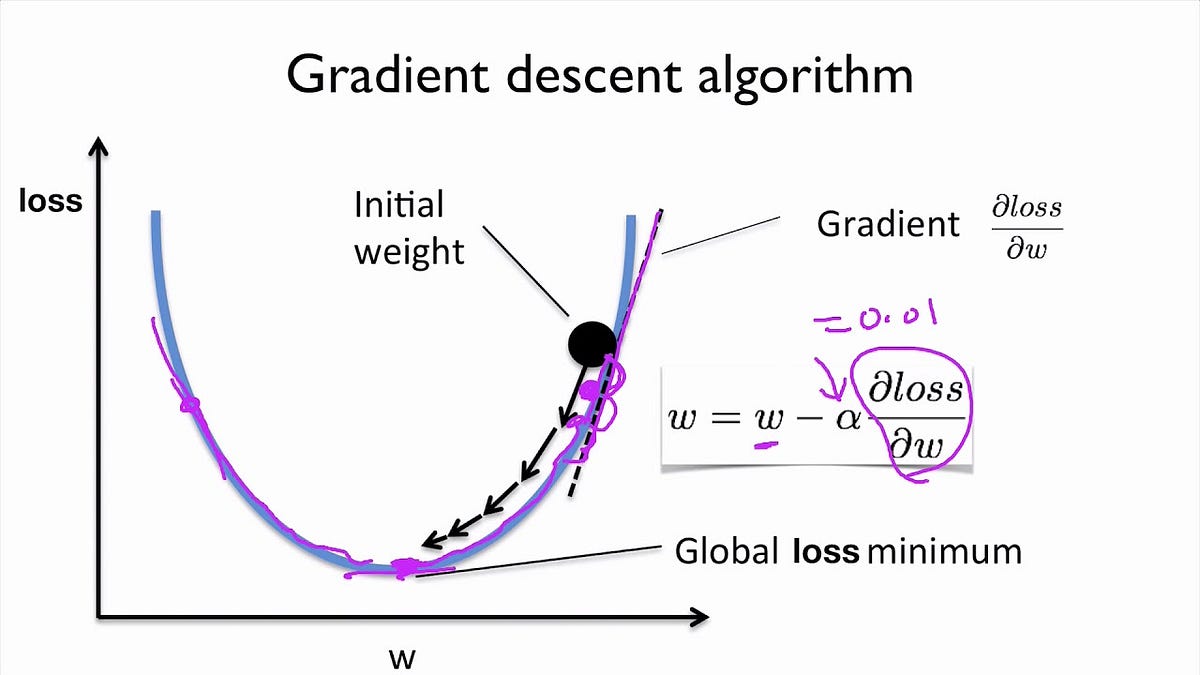

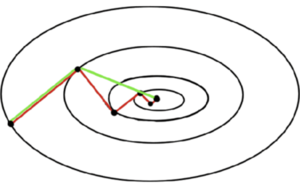

Gradient Descent Algorithm

[Solved] 4. Gradient descent is a first-order iterative optimisation

Gradient Descent algorithm. How to find the minimum of a function…, by Raghunath D

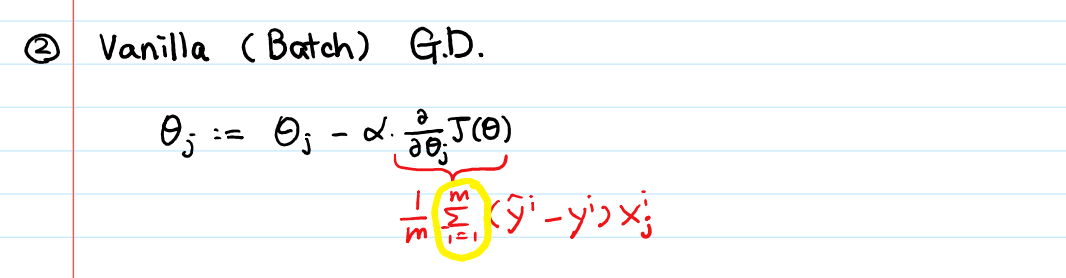

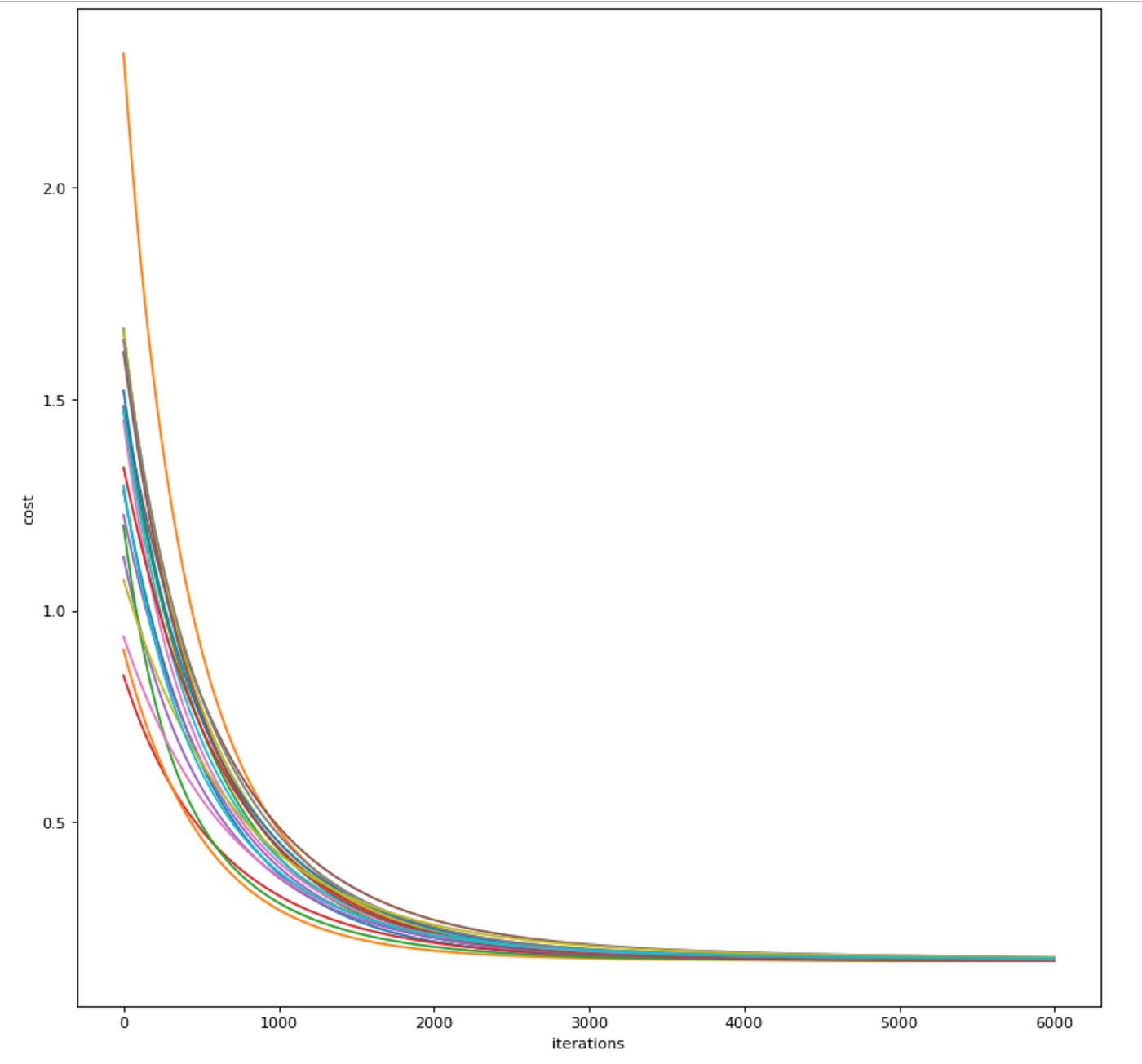

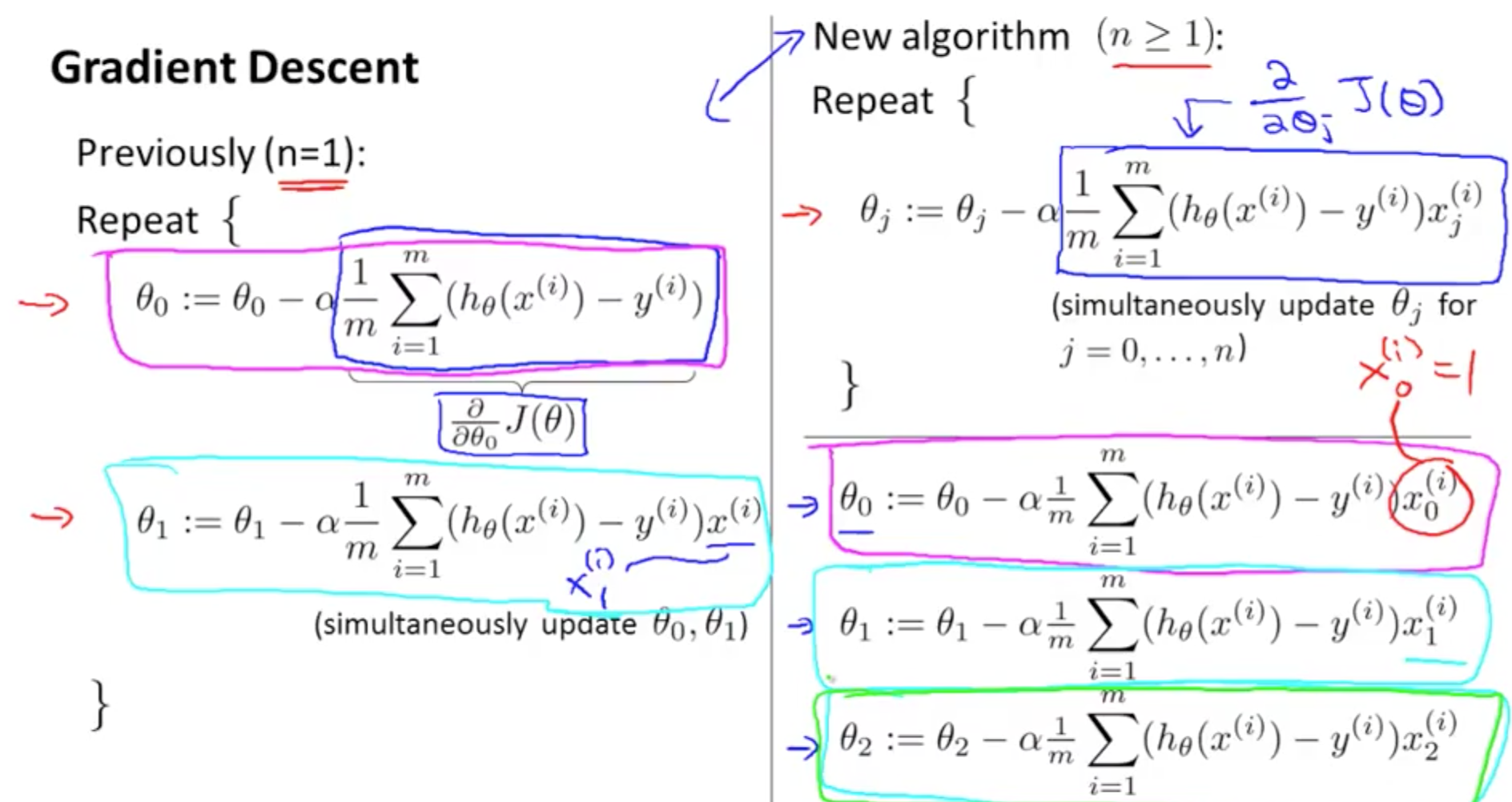

Linear Regression From Scratch PT2: The Gradient Descent Algorithm, by Aminah Mardiyyah Rufai, Nerd For Tech

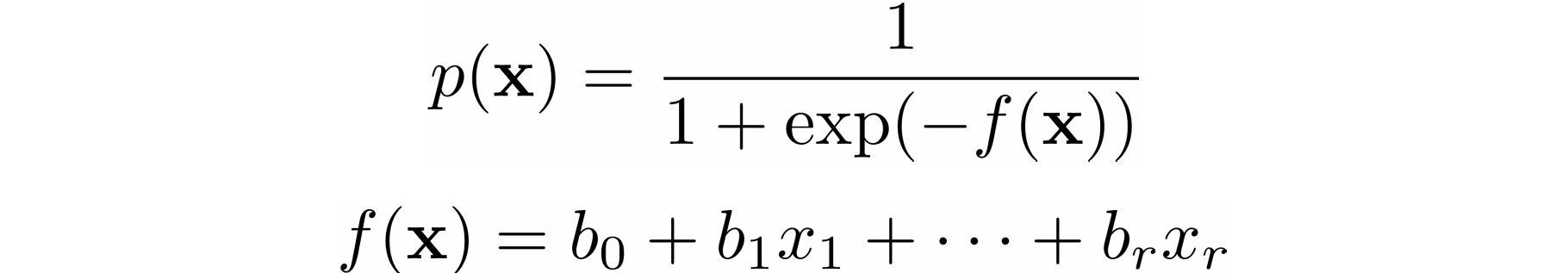

Linear Regression with Multiple Variables Machine Learning, Deep Learning, and Computer Vision

Can gradient descent be used to find minima and maxima of functions? If not, then why not? - Quora

Recommended for you

- Method of steepest descent - Wikipedia
- Steepest Descent Method - an overview
- Gradient Descent Algorithm in Machine Learning - Analytics Vidhya
- Conjugate gradient methods - Cornell University Computational Optimization Open Textbook - Optimization Wiki
- 5.5.3.1.1. Single response: Path of steepest ascent
- (PDF) Steepest Descent, Juan Meza
- Steepest descent method in sc
- Gradient Descent Big Data Mining & Machine Learning
- Gradient Descent in Machine Learning - Javatpoint
- Stochastic Gradient Descent Algorithm With Python and NumPy – Real Python
